(beta) Channels Last Memory Format in PyTorch¶

Created On: Apr 20, 2020 | Last Updated: Oct 04, 2023 | Last Verified: Nov 05, 2024

Author: Vitaly Fedyunin

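What is Channels Last¶

Channels Last memory format is an alternative way of ordering NCHW tensors in memory while preserving the order of dimensions. Channels Last tensors are laid out so that channels become the densest dimension (that is, images are stored pixel by pixel).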

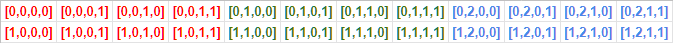

For example, classic (contiguous) storage of an NCHW tensor (here, two 4x4 images with 3 color channels) looks like this:

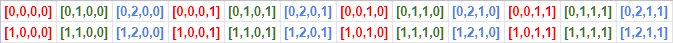

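Channels Last memory format orders the data differently:

PyTorch supports memory formats (and provides backward compatibility with existing models, including eager, JIT, and TorchScript) by reusing the existing strides structure. For example, a 10x3x16x16 batch in Channels Last format has strides equal to (768, 1, 48, 3).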
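The stride arithmetic above can be reproduced by hand. The following is a minimal sketch in plain Python; the helper names (`contiguous_strides`, `channels_last_strides`, `memory_order`) are hypothetical and not part of PyTorch. It derives both stride tuples from an NCHW shape and lists the order in which elements sit in memory.

```python
from itertools import product


def contiguous_strides(shape):
    """Strides (in elements) of a classic contiguous NCHW tensor."""
    _, c, h, w = shape
    return (c * h * w, h * w, w, 1)


def channels_last_strides(shape):
    """Strides of the same NCHW shape stored channels-last (NHWC in memory)."""
    _, c, h, w = shape
    return (h * w * c, 1, w * c, c)


def memory_order(shape, strides):
    """Logical (n, c, h, w) indices sorted by their offset in storage."""
    indices = product(*(range(dim) for dim in shape))
    return sorted(indices, key=lambda idx: sum(i * s for i, s in zip(idx, strides)))


shape = (10, 3, 16, 16)
print(contiguous_strides(shape))     # (768, 256, 16, 1)
print(channels_last_strides(shape))  # (768, 1, 48, 3)

# For a tiny 1x3x1x2 tensor, channels-last keeps all channels of a pixel together.
small = (1, 3, 1, 2)
print(memory_order(small, channels_last_strides(small))[:3])
# [(0, 0, 0, 0), (0, 1, 0, 0), (0, 2, 0, 0)]
```

The first three storage slots hold channels 0, 1, and 2 of the same pixel, which is what "channels as the densest dimension" means in practice.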

Channels Last memory format is implemented for 4D NCHW tensors only.

Memory Format API¶

Here is how to convert tensors between contiguous and Channels Last memory formats.

Classic PyTorch contiguous tensor

import torch

N, C, H, W = 10, 3, 32, 32

x = torch.empty(N, C, H, W)

print(x.stride()) # Outputs: (3072, 1024, 32, 1)

Conversion operator

x = x.to(memory_format=torch.channels_last)

print(x.shape) # Outputs: (10, 3, 32, 32) as dimensions order preserved

print(x.stride()) # Outputs: (3072, 1, 96, 3)

Back to contiguous

x = x.to(memory_format=torch.contiguous_format)

print(x.stride()) # Outputs: (3072, 1024, 32, 1)

Alternative option

x = x.contiguous(memory_format=torch.channels_last)

print(x.stride()) # Outputs: (3072, 1, 96, 3)

Format checks

print(x.is_contiguous(memory_format=torch.channels_last)) # Outputs: True

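There are minor differences between the two APIs `to` and `contiguous`. We suggest sticking with `to` when explicitly converting the memory format of a tensor.

For general cases the two APIs behave the same. However, in special cases for a 4D tensor of size NCHW where either C==1 or H==1 && W==1, only `to` generates proper strides to represent the Channels Last memory format.

This is because in either of the two cases above the memory format of the tensor is ambiguous: a contiguous tensor of size N1HW is both contiguous and Channels Last in memory storage. Such tensors are therefore already considered `is_contiguous` for the given memory format, so calling `contiguous` becomes a no-op and does not update the strides. In contrast, `to` restrides the tensor with meaningful strides in order to properly represent the intended memory format.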

special_x = torch.empty(4, 1, 4, 4)

print(special_x.is_contiguous(memory_format=torch.channels_last)) # Outputs: True

print(special_x.is_contiguous(memory_format=torch.contiguous_format)) # Outputs: True
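To see the difference between the two APIs on such an ambiguous tensor, the sketch below prints the strides produced by each call. It uses only calls that already appear in this tutorial; the variable names `via_contiguous` and `via_to` are illustrative. The exact stride values may depend on the PyTorch version, so none are stated here.

```python
import torch

special_x = torch.empty(4, 1, 4, 4)

# contiguous() treats the tensor as already channels-last, so strides may stay unchanged.
via_contiguous = special_x.contiguous(memory_format=torch.channels_last)
# to() is expected to rewrite the strides so they describe channels-last explicitly.
via_to = special_x.to(memory_format=torch.channels_last)

print(special_x.stride())
print(via_contiguous.stride())
print(via_to.stride())

# Both results pass the channels-last check, whatever their strides are.
print(via_contiguous.is_contiguous(memory_format=torch.channels_last))
print(via_to.is_contiguous(memory_format=torch.channels_last))
```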

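The same applies to the explicit permutation API `permute`. In the special cases where ambiguity can occur, `permute` is not guaranteed to produce strides that properly carry the intended memory format. We suggest using `to` with an explicit memory format to avoid unintended behavior.

As a side note, in the extreme case where all three non-batch dimensions are equal to 1 (`C==1 && H==1 && W==1`), the current implementation cannot mark a tensor as Channels Last memory format.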
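For the unambiguous case, the relationship between `permute` and Channels Last can be sketched as follows. The example assumes image data that already sits in NHWC order (the name `nhwc` is illustrative); permuting it to NCHW yields a view whose strides match the Channels Last layout without copying any data.

```python
import torch

N, C, H, W = 10, 3, 32, 32

# Data stored pixel by pixel, as NHWC.
nhwc = torch.empty(N, H, W, C)

# View it as NCHW; no data is moved, only sizes and strides are reordered.
nchw_view = nhwc.permute(0, 3, 1, 2)

print(nchw_view.shape)     # torch.Size([10, 3, 32, 32])
print(nchw_view.stride())  # (3072, 1, 96, 3)
print(nchw_view.is_contiguous(memory_format=torch.channels_last))  # True
```

When C==1 or H==1 && W==1 this equivalence is no longer guaranteed, which is why `to` with an explicit memory format remains the safer choice.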

Create as Channels Last

x = torch.empty(N, C, H, W, memory_format=torch.channels_last)

print(x.stride()) # Outputs: (3072, 1, 96, 3)

`clone` preserves memory format

y = x.clone()

print(y.stride()) # Outputs: (3072, 1, 96, 3)

`to`, `cuda`, `float` … preserve memory format

if torch.cuda.is_available():
    y = x.cuda()
    print(y.stride())  # Outputs: (3072, 1, 96, 3)

`empty_like`, `*_like` operators preserve memory format

y = torch.empty_like(x)

print(y.stride()) # Outputs: (3072, 1, 96, 3)

Pointwise operators preserve memory format

z = x + y

print(z.stride()) # Outputs: (3072, 1, 96, 3)

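Conv and Batchnorm modules using cuDNN backends support Channels Last (only works for cuDNN >= 7.6). Convolution modules, unlike binary pointwise operators, have Channels Last as the dominating memory format. If all inputs are in contiguous memory format, the operator produces output in contiguous memory format. Otherwise, the output is in Channels Last memory format.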

if torch.backends.cudnn.is_available() and torch.backends.cudnn.version() >= 7603:
    model = torch.nn.Conv2d(8, 4, 3).cuda().half()
    model = model.to(memory_format=torch.channels_last)  # Module parameters need to be channels last
    input = torch.randint(1, 10, (2, 8, 4, 4), dtype=torch.float32, requires_grad=True)
    input = input.to(device="cuda", memory_format=torch.channels_last, dtype=torch.float16)
    out = model(input)
    print(out.is_contiguous(memory_format=torch.channels_last))  # Outputs: True

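When an input tensor reaches an operator without Channels Last support, a permutation is automatically applied in the kernel to restore the contiguous format on the input tensor. This introduces overhead and stops the propagation of the Channels Last memory format. Nevertheless, it guarantees correct output.

Performance Gains¶

Channels Last memory format optimizations are available on both GPU and CPU. On GPU, the most significant performance gains are observed on NVIDIA hardware with Tensor Core support when running reduced-precision (`torch.float16`) workloads. We achieved over 22% performance gains with Channels Last compared to the contiguous format, with both runs using 'AMP (Automated Mixed Precision)' training scripts. Our scripts use the AMP supplied by NVIDIA: https://github.com/NVIDIA/apex.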

python main_amp.py -a resnet50 --b 200 --workers 16 --opt-level O2 ./data

# opt_level = O2

# keep_batchnorm_fp32 = None <class 'NoneType'>

# loss_scale = None <class 'NoneType'>

# CUDNN VERSION: 7603

# => creating model 'resnet50'

# Selected optimization level O2: FP16 training with FP32 batchnorm and FP32 master weights.

# Defaults for this optimization level are:

# enabled : True

# opt_level : O2

# cast_model_type : torch.float16

# patch_torch_functions : False

# keep_batchnorm_fp32 : True

# master_weights : True

# loss_scale : dynamic

# Processing user overrides (additional kwargs that are not None)...

# After processing overrides, optimization options are:

# enabled : True

# opt_level : O2

# cast_model_type : torch.float16

# patch_torch_functions : False

# keep_batchnorm_fp32 : True

# master_weights : True

# loss_scale : dynamic

# Epoch: [0][10/125] Time 0.866 (0.866) Speed 230.949 (230.949) Loss 0.6735125184 (0.6735) Prec@1 61.000 (61.000) Prec@5 100.000 (100.000)

# Epoch: [0][20/125] Time 0.259 (0.562) Speed 773.481 (355.693) Loss 0.6968704462 (0.6852) Prec@1 55.000 (58.000) Prec@5 100.000 (100.000)

# Epoch: [0][30/125] Time 0.258 (0.461) Speed 775.089 (433.965) Loss 0.7877287269 (0.7194) Prec@1 51.500 (55.833) Prec@5 100.000 (100.000)

# Epoch: [0][40/125] Time 0.259 (0.410) Speed 771.710 (487.281) Loss 0.8285319805 (0.7467) Prec@1 48.500 (54.000) Prec@5 100.000 (100.000)

# Epoch: [0][50/125] Time 0.260 (0.380) Speed 770.090 (525.908) Loss 0.7370464802 (0.7447) Prec@1 56.500 (54.500) Prec@5 100.000 (100.000)

# Epoch: [0][60/125] Time 0.258 (0.360) Speed 775.623 (555.728) Loss 0.7592862844 (0.7472) Prec@1 51.000 (53.917) Prec@5 100.000 (100.000)

# Epoch: [0][70/125] Time 0.258 (0.345) Speed 774.746 (579.115) Loss 1.9698858261 (0.9218) Prec@1 49.500 (53.286) Prec@5 100.000 (100.000)

# Epoch: [0][80/125] Time 0.260 (0.335) Speed 770.324 (597.659) Loss 2.2505953312 (1.0879) Prec@1 50.500 (52.938) Prec@5 100.000 (100.000)

Passing `--channels-last true` runs the model in Channels Last format, with an observed 22% performance gain.

python main_amp.py -a resnet50 --b 200 --workers 16 --opt-level O2 --channels-last true ./data

# opt_level = O2

# keep_batchnorm_fp32 = None <class 'NoneType'>

# loss_scale = None <class 'NoneType'>

#

# CUDNN VERSION: 7603

#

# => creating model 'resnet50'

# Selected optimization level O2: FP16 training with FP32 batchnorm and FP32 master weights.

#

# Defaults for this optimization level are:

# enabled : True

# opt_level : O2

# cast_model_type : torch.float16

# patch_torch_functions : False

# keep_batchnorm_fp32 : True

# master_weights : True

# loss_scale : dynamic

# Processing user overrides (additional kwargs that are not None)...

# After processing overrides, optimization options are:

# enabled : True

# opt_level : O2

# cast_model_type : torch.float16

# patch_torch_functions : False

# keep_batchnorm_fp32 : True

# master_weights : True

# loss_scale : dynamic

#

# Epoch: [0][10/125] Time 0.767 (0.767) Speed 260.785 (260.785) Loss 0.7579724789 (0.7580) Prec@1 53.500 (53.500) Prec@5 100.000 (100.000)

# Epoch: [0][20/125] Time 0.198 (0.482) Speed 1012.135 (414.716) Loss 0.7007197738 (0.7293) Prec@1 49.000 (51.250) Prec@5 100.000 (100.000)

# Epoch: [0][30/125] Time 0.198 (0.387) Speed 1010.977 (516.198) Loss 0.7113101482 (0.7233) Prec@1 55.500 (52.667) Prec@5 100.000 (100.000)

# Epoch: [0][40/125] Time 0.197 (0.340) Speed 1013.023 (588.333) Loss 0.8943189979 (0.7661) Prec@1 54.000 (53.000) Prec@5 100.000 (100.000)

# Epoch: [0][50/125] Time 0.198 (0.312) Speed 1010.541 (641.977) Loss 1.7113249302 (0.9551) Prec@1 51.000 (52.600) Prec@5 100.000 (100.000)

# Epoch: [0][60/125] Time 0.198 (0.293) Speed 1011.163 (683.574) Loss 5.8537774086 (1.7716) Prec@1 50.500 (52.250) Prec@5 100.000 (100.000)

# Epoch: [0][70/125] Time 0.198 (0.279) Speed 1011.453 (716.767) Loss 5.7595844269 (2.3413) Prec@1 46.500 (51.429) Prec@5 100.000 (100.000)

# Epoch: [0][80/125] Time 0.198 (0.269) Speed 1011.827 (743.883) Loss 2.8196096420 (2.4011) Prec@1 47.500 (50.938) Prec@5 100.000 (100.000)
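The gain can be checked against the two logs above. The short calculation below takes the steady-state per-iteration times from the logs (about 0.259 s for the contiguous run and about 0.198 s for the Channels Last run, at batch size 200); the variable names are illustrative.

```python
batch_size = 200
time_contiguous = 0.259     # seconds per iteration, contiguous run
time_channels_last = 0.198  # seconds per iteration, Channels Last run

# Fraction of iteration time saved by switching formats.
time_reduction = (time_contiguous - time_channels_last) / time_contiguous
# Ratio of throughputs (images per second).
throughput_ratio = (batch_size / time_channels_last) / (batch_size / time_contiguous)

print(f"iteration time reduced by {time_reduction:.1%}")  # 23.6%
print(f"throughput ratio: {throughput_ratio:.2f}x")       # 1.31x
```

The iteration time drops by roughly 23.6%, consistent with the "over 22%" figure, and corresponds to about 1.31x the throughput reported in the Speed column.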

The following models have full support for Channels Last and show 8%-35% performance gains on Volta devices: alexnet, mnasnet0_5, mnasnet0_75, mnasnet1_0, mnasnet1_3, mobilenet_v2, resnet101, resnet152, resnet18, resnet34, resnet50, resnext50_32x4d, shufflenet_v2_x0_5, shufflenet_v2_x1_0, shufflenet_v2_x1_5, shufflenet_v2_x2_0, squeezenet1_0, squeezenet1_1, vgg11, vgg11_bn, vgg13, vgg13_bn, vgg16, vgg16_bn, vgg19, vgg19_bn, wide_resnet101_2, wide_resnet50_2.

The following models have full support for Channels Last and show 26%-76% performance gains on Intel(R) Xeon(R) Ice Lake (or newer) CPUs: alexnet, densenet121, densenet161, densenet169, googlenet, inception_v3, mnasnet0_5, mnasnet1_0, resnet101, resnet152, resnet18, resnet34, resnet50, resnext101_32x8d, resnext50_32x4d, shufflenet_v2_x0_5, shufflenet_v2_x1_0, squeezenet1_0, squeezenet1_1, vgg11, vgg11_bn, vgg13, vgg13_bn, vgg16, vgg16_bn, vgg19, vgg19_bn, wide_resnet101_2, wide_resnet50_2.

Converting existing models¶

Channels Last support is not limited to the models listed above: any model can be converted to Channels Last and will propagate the format through the graph as soon as the input (or certain weights) is formatted correctly.

# Need to be done once, after model initialization (or load)

model = model.to(memory_format=torch.channels_last) # Replace with your model

# Need to be done for every input

input = input.to(memory_format=torch.channels_last) # Replace with your input

output = model(input)
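As a concrete instance of the two-step recipe above, here is a minimal sketch on CPU with a small, hypothetical stand-in model (a single Conv2d followed by ReLU). It checks that the conversion changes only the layout, not the numerical result. Whether the output itself comes back in Channels Last depends on the backend and PyTorch version, so that is printed rather than assumed.

```python
import torch

torch.manual_seed(0)

# Hypothetical stand-in for "your model".
model = torch.nn.Sequential(torch.nn.Conv2d(3, 8, kernel_size=3), torch.nn.ReLU()).eval()
inp = torch.randn(2, 3, 16, 16)

with torch.no_grad():
    reference = model(inp)  # contiguous baseline

    # Done once, after model initialization (or load).
    model = model.to(memory_format=torch.channels_last)
    # Done for every input.
    inp_cl = inp.to(memory_format=torch.channels_last)
    output = model(inp_cl)

# The 4D convolution weight is now stored channels-last.
print(model[0].weight.is_contiguous(memory_format=torch.channels_last))
# Same values as the contiguous run, up to floating-point tolerance.
print(torch.allclose(output, reference, atol=1e-4))
# Backend-dependent: did the output keep the Channels Last format?
print(output.is_contiguous(memory_format=torch.channels_last))
```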

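However, not all operators are fully converted to support Channels Last (usually they return contiguous output instead). In the example above, layers that do not support Channels Last stop the memory format propagation. Even so, because the model has been converted to Channels Last, each convolution layer, whose 4-dimensional weight is in Channels Last memory format, restores the Channels Last memory format and benefits from faster kernels.

Operators that do not support Channels Last do, however, introduce overhead through permutation. Optionally, if you want to improve the performance of the converted model, you can investigate and identify the operators in your model that do not support Channels Last.

This means you need to check the list of operators you use against the supported operators list at https://github.com/pytorch/pytorch/wiki/Operators-with-Channels-Last-support, or introduce memory format checks in eager execution mode and run your model.

After running the code below, operators raise an exception if their output does not match the memory format of the input.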

def contains_cl(args):
    # True if any (possibly nested) tensor argument is channels-last and not plain contiguous.
    for t in args:
        if isinstance(t, torch.Tensor):
            if t.is_contiguous(memory_format=torch.channels_last) and not t.is_contiguous():
                return True
        elif isinstance(t, (list, tuple)):
            if contains_cl(list(t)):
                return True
    return False


def print_inputs(args, indent=""):
    # Print stride, shape, device and dtype of every tensor argument.
    for t in args:
        if isinstance(t, torch.Tensor):
            print(indent, t.stride(), t.shape, t.device, t.dtype)
        elif isinstance(t, (list, tuple)):
            print(indent, type(t))
            print_inputs(list(t), indent=indent + "    ")
        else:
            print(indent, t)


def check_wrapper(fn):
    name = fn.__name__

    def check_cl(*args, **kwargs):
        was_cl = contains_cl(args)
        try:
            result = fn(*args, **kwargs)
        except Exception:
            print("`{}` inputs are:".format(name))
            print_inputs(args)
            print("-------------------")
            raise
        failed = False
        if was_cl:
            if isinstance(result, torch.Tensor):
                if result.dim() == 4 and not result.is_contiguous(memory_format=torch.channels_last):
                    print(
                        "`{}` got channels_last input, but output is not channels_last:".format(name),
                        result.shape,
                        result.stride(),
                        result.device,
                        result.dtype,
                    )
                    failed = True
        if failed:
            print("`{}` inputs are:".format(name))
            print_inputs(args)
            raise Exception("Operator `{}` lost channels_last property".format(name))
        return result

    return check_cl


# Original attributes, saved so that they can be restored later.
old_attrs = dict()


def attribute(m):
    # Wrap every public callable attribute of `m` with the channels-last check.
    old_attrs[m] = dict()
    exclude_functions = ["is_cuda", "has_names", "numel", "stride", "Tensor", "is_contiguous", "__class__"]
    for i in dir(m):
        e = getattr(m, i)
        if i not in exclude_functions and not i.startswith("_") and "__call__" in dir(e):
            try:
                old_attrs[m][i] = e
                setattr(m, i, check_wrapper(e))
            except Exception as err:
                print(i)
                print(err)


attribute(torch.Tensor)
attribute(torch.nn.functional)
attribute(torch)

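If you find an operator that does not support Channels Last tensors and you want to contribute, feel free to use the following developer guide: https://github.com/pytorch/pytorch/wiki/Writing-memory-format-aware-operators.

The code below restores the attributes of torch.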

for (m, attrs) in old_attrs.items():
    for (k, v) in attrs.items():
        setattr(m, k, v)
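A lighter-weight way to locate where the format is lost, without patching torch attributes, is to attach forward hooks to the leaf modules of a model. The sketch below is an alternative built on assumptions rather than part of the original tutorial: `report_format_loss` and `is_channels_last_only` are hypothetical names, and the approach only inspects module outputs, not functional calls such as `torch.nn.functional.relu`.

```python
import torch


def is_channels_last_only(t):
    """True for a 4D tensor that is channels-last but not plain contiguous."""
    return (
        isinstance(t, torch.Tensor)
        and t.dim() == 4
        and t.is_contiguous(memory_format=torch.channels_last)
        and not t.is_contiguous()
    )


def report_format_loss(model, example_input):
    """Run one forward pass and return the names of leaf modules that received a
    channels-last input but produced a 4D output in another format."""
    offenders, handles = [], []

    def make_hook(name):
        def hook(module, inputs, output):
            got_cl = any(is_channels_last_only(t) for t in inputs)
            if (got_cl and isinstance(output, torch.Tensor) and output.dim() == 4
                    and not output.is_contiguous(memory_format=torch.channels_last)):
                offenders.append(name)
        return hook

    for name, module in model.named_modules():
        if not list(module.children()):  # leaf modules only
            handles.append(module.register_forward_hook(make_hook(name)))
    try:
        with torch.no_grad():
            model(example_input)
    finally:
        for handle in handles:
            handle.remove()  # always detach the hooks again
    return offenders


# Usage with a small stand-in model; the result depends on backend and version.
model = torch.nn.Sequential(torch.nn.Conv2d(3, 8, 3), torch.nn.ReLU())
model = model.to(memory_format=torch.channels_last)
x = torch.randn(2, 3, 16, 16).to(memory_format=torch.channels_last)
lost = report_format_loss(model, x)
print(lost)
```

An empty list means every leaf module that saw a Channels Last input also returned one; any names listed are the first places to compare against the supported operators list.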